UPSTREAM PR #1184: Feat: Select backend devices via arg#40

UPSTREAM PR #1184: Feat: Select backend devices via arg#40

Conversation

052ebb0 to

76ede2c

Compare

29e8399 to

2d43513

Compare

OverviewAnalysis of stable-diffusion.cpp across 18 commits reveals minimal performance impact from multi-backend device management refactoring. Of 48,425 total functions, 124 were modified (0.26%), 331 added, and 109 removed. Power consumption increased negligibly: build.bin.sd-cli (+0.388%, 479,167→481,028 nJ) and build.bin.sd-server (+0.239%, 512,977→514,202 nJ). Function AnalysisSDContextParams Constructor (both binaries): Response time increased ~40% (+2,816-2,840ns) due to initializing 9 new SDContextParams Destructor (both binaries): Response time increased ~42% (+2,497-2,505ns) from destroying 9 additional string members. One-time cleanup cost outside inference paths. ~StableDiffusionGGML (both binaries): Throughput time increased ~95% (+192ns absolute) managing 7 backend types versus 3, including loop-based cleanup for multiple CLIP backends. Response time impact minimal (+5.2%, ~720ns). ggml_e8m0_to_fp32_half (sd-cli): Response time improved 24% (-36ns), benefiting quantization operations called millions of times during inference. Standard library functions (std::_Rb_tree::begin, std::vector::_S_max_size, std::swap): Showed 76-289% throughput increases due to template instantiation complexity, but absolute changes remain under 220ns in non-critical initialization paths. Additional FindingsAll performance regressions occur in initialization and cleanup phases, not inference hot paths. The architectural changes enable multi-GPU workload distribution, per-component device placement (diffusion, CLIP, VAE on separate devices), and runtime backend flexibility. Quantization improvements and multi-GPU capabilities provide net performance gains during actual inference, far exceeding the microsecond-level initialization overhead. Changes are well-justified architectural improvements with negligible real-world impact. 🔎 Full breakdown: Loci Inspector. |

5bbc590 to

68f62a5

Compare

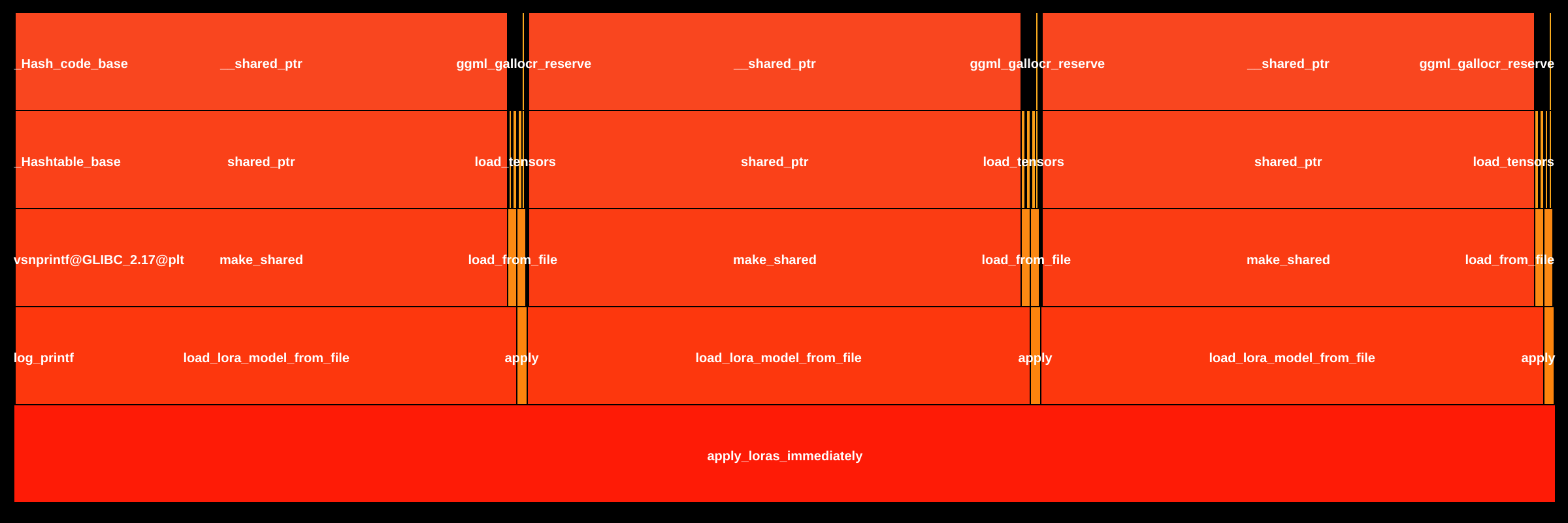

OverviewAnalysis of stable-diffusion.cpp compared 50,147 functions across two binaries: build.bin.sd-cli (power consumption: 491,453 nJ → 494,103 nJ, +0.539%) and build.bin.sd-server (527,149 nJ → 528,268 nJ, +0.212%). Of these, 139 functions were modified, 406 added, 63 removed, and 49,539 unchanged. The target version implements a multi-backend architecture enabling heterogeneous computing (GPU/CPU/RPC) with per-component device allocation, introducing measured performance trade-offs in initialization paths while maintaining minimal overall energy impact. Function Analysisapply_loras_immediately (both binaries): Response time increased from ~10.6ms to ~31.2ms (+191-193%, +20.5ms absolute). Throughput time doubled from ~685ns to ~1,370ns (+100%). The function was refactored to load LoRA models three times with backend-specific filtering (diffusion, CLIP, VAE/TAE) instead of once, enabling component-specific device placement and preventing tensor contamination. This architectural change is justified for multi-GPU support and correctness. neon_compute_fp16_to_fp32 (sd-cli): Response time increased from 85ns to 305ns (+258%, +220ns). Throughput time increased from 77ns to 298ns (+286%). This NEON-optimized FP16→FP32 conversion regression originates from the external GGML library, not application code. The 3.6x slowdown could impact ARM platforms if called frequently during tensor operations. ~StableDiffusionGGML (both binaries): Throughput time increased from 201ns to 393ns (+95%, +192ns). Response time increased from ~13.9ms to ~14.6ms (+5%, +720ns). The destructor now iterates through multiple CLIP backends and includes conditional checks for diffusion_backend and tae_backend to prevent double-free errors, supporting the multi-backend architecture. ~SDContextParams (both binaries): Response time increased from ~5.9ms to ~8.4ms (+42%, +2.5μs). Throughput time increased from 115ns to 166ns (+44%). The compiler-generated destructor now cleans up 9 std::string members for backend device configuration instead of 3 boolean flags, an expected trade-off for flexible device specification. ggml_compute_forward_map_custom2 (sd-server): Response time increased from 110ns to 191ns (+75%, +82ns). Throughput time increased from 95ns to 177ns (+86%). This GGML custom operation likely added backend compatibility checks or precision handling logic for runtime backend management. Standard library functions showed improvements: vector::begin (-68% response time), basic_string::_M_set_length (-42% response time), and vector::_S_max_size (-57% response time), offsetting some architectural overhead through compiler optimizations. Additional FindingsThe multi-backend refactoring enables critical ML deployment capabilities: parallel CLIP encoding across multiple GPUs, heterogeneous execution (CPU text encoding while GPU handles diffusion), and distributed inference via RPC. Performance regressions are concentrated in initialization and configuration paths rather than inference hot paths, with <1% power consumption increase indicating efficient implementation. The NEON regression warrants investigation for ARM-based edge deployments where FP16 quantized models are common. 🔎 Full breakdown: Loci Inspector |

dd19ab8 to

98460a7

Compare

fix sdxl conditionner backends fix sd3 backend display

79f3aa5 to

4fb8901

Compare

OverviewAnalysis of 50,067 functions across 23 commits implementing multi-backend device selection for distributed inference. Modified: 142 functions (0.28%), New: 442, Removed: 63. Binaries analyzed:

Impact: Performance changes are justified architectural improvements enabling multi-GPU support, distributed inference via RPC, and flexible hardware resource allocation for SDXL/SD3/FLUX models. Function Analysis

Standard library regressions:

Other analyzed functions (constructors, lambda operators) showed minimal overhead (<100ns absolute) justified by architectural enhancements. Flame Graph ComparisonFunction: Target version shows three-pass architecture with Additional FindingsML/GPU Operations: Changes enable parallel text encoder execution on separate GPUs (critical for SDXL CLIP-L + CLIP-G, SD3/FLUX multi-encoder architectures), distributed inference via RPC backends, and flexible VAE offloading to reduce GPU memory pressure. Initialization overhead (~21ms) is negligible compared to typical inference time (5-30 seconds per image). Diffusion sampling loops remain unaffected. Standard library regressions ( 🔎 Full breakdown: Loci Inspector |

Note

Source pull request: leejet/stable-diffusion.cpp#1184

The main goal of this PR is to improve user experience in multi-gpu setups, allowing to chose which model part gets sent to which device.

Cli changes:

--main-backend-device [device_name]argument to set the default backend--clip-on-cpu,--vae-on-cpuand--control-net-cpuarguments--clip_backend_device [device_name],--vae-backend-device [device_name],--control-net-backend-device [device_name]arguments--diffusion_backend_device(control the device used for the diffusion/flow models) and the--tae-backend-device--upscaler-backend-device,--photomaker-backend-device, and--vision-backend-device--list-devicesargument to print the list of available ggml devices and exit.--rpcargument to connect to a compatible GGML rpc serverC API changes (stable-diffusion.h):

sd_ctx_params_tstruct.void list_backends_to_buffer(char* buffer, size_t buffer_size)to write the details of the available buffers to a null-terminated char array. Devices are separated by newline characters (\n), and the name and description of the device are separated by\tcharacter.size_t backend_list_size()to get the size of the buffer needed for void list_backends_to_buffervoid add_rpc_device(const char* address);connect to a ggml RPC backend (from llama.cpp)The default device selection should now consistently prioritize discrete GPUs over iGPUs.

For example if you want to run the text encoders on CPU, you'd need to use

--clip_backend_device CPUinstead of--clip-on-cpuTODO:

--lora-apply-mode immediatelywhen clip and diffsion models are running on different (non-cpu) backends.Important: to use RPC, you need to add

-DGGML_RPC=ONto the build. Additionally it requires either sd.cpp to be built with-DSD_USE_SYSTEM_GGMLflag (I haven't tested that one), or the RPC server to be built with-DCMAKE_C_FLAGS="-DGGML_MAX_NAME=128" -DCMAKE_CXX_FLAGS="-DGGML_MAX_NAME=128"(default is 64)Fixes #1116